Posted by Google Creative Lab

“Bad Guy” by Billie Eilish is one of the most-covered songs on YouTube, inspiring thousands of fans to upload their own versions. To celebrate all these covers, YouTube and Google Creative Lab built an AI experiment to combine all of them seamlessly in the world’s first infinite music video: Infinite Bad Guy. The experience aligns every cover to the same beat, no matter its genre, language, or instrumentation.

Finding all the covers

How do you find “Bad Guy” covers among the billions of videos on YouTube? Simply searching for “Bad Guy” would return false positives, such as videos of Billie being interviewed about the song, and would miss covers that don’t use the song name in their titles. YouTube’s ContentID system lets us find videos that match the musical composition “Bad Guy” and narrow the search to videos that appear to be performances or creative interpretations of the song. That way, we also avoid videos where “Bad Guy” is just background music. We continue to run this search daily, collecting an ever-expanding list of potential covers to use in the experience.

Aligning all the covers to the same beat

A key part of the experience is being able to jump from cover to cover seamlessly. But fan covers of “Bad Guy” vary widely. Some are close to the original, like a dance video set to Billie’s track. Some vary more in tempo and instrumentation, like a heavy metal cover. And others diverge greatly from the original, like a clarinet version with no lyrics. How can you get all these covers on the same beat? After trying several approaches, including dynamic time warping and chord recognition, we found the most success with a recurrent neural network trained to recognize the sections and beats of “Bad Guy.” We collaborated with our friends at IYOYO on cover alignment, and they have a great writeup about the process.
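For readers unfamiliar with dynamic time warping, one of the approaches mentioned above, here is a minimal sketch of the classic algorithm. It is an illustration only, not the recurrent network used in the experience and not the actual code that was tried. The function name `dtw_path` and the toy one-dimensional "feature" sequences are assumptions made for the example; a real system would compare audio features such as chroma vectors.

```python
def dtw_path(a, b):
    """Align two 1-D sequences with classic dynamic time warping.

    Returns (total_cost, path), where path is a list of (i, j) index pairs
    matching a[i] to b[j]. Hypothetical helper for illustration only.
    """
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = minimal cumulative cost of aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])  # local distance between frames
            cost[i][j] = d + min(
                cost[i - 1][j - 1],  # advance both sequences
                cost[i - 1][j],      # advance a only (b is "slower" here)
                cost[i][j - 1],      # advance b only (a is "slower" here)
            )
    # Walk back from the end to recover which frames were matched.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        _, i, j = min(
            (cost[i - 1][j - 1], i - 1, j - 1),
            (cost[i - 1][j], i - 1, j),
            (cost[i][j - 1], i, j - 1),
        )
    path.reverse()
    return cost[n][m], path


# Toy example: the second sequence is a stretched version of the first,
# the way a slower cover stretches the original melody in time.
original = [0, 1, 2, 3]
slower_cover = [0, 0, 1, 2, 2, 3]
total_cost, path = dtw_path(original, slower_cover)
```

Because the stretched sequence contains the same values in the same order, the alignment cost in this toy case is zero. Covers that change instrumentation or drop the vocals no longer share such clean features, which is one plausible reason a learned model can do better on material this varied.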

Building the experience

Finding and aligning the covers is a fascinating research problem, but the crucial final step is making them explorable to everyone. We’ve tried to make it intuitive and fun to navigate all the infinite combinations, while keeping latency low so the song never drops a beat.
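To give a sense of what switching covers without dropping a beat involves, here is a minimal sketch of mapping a playback position in one cover to the same musical position in another. It assumes each cover already comes with a list of timestamps (in seconds) for the same beats of the song, which is the kind of output an alignment step could produce. The function `map_time` and the data layout are hypothetical and are not taken from the actual experience.

```python
from bisect import bisect_right


def map_time(t, beats_from, beats_to):
    """Map time t (seconds) in one cover to the matching moment in another.

    beats_from and beats_to are assumed to hold timestamps of the same
    beats of the song, in increasing order, one entry per beat.
    """
    if len(beats_from) != len(beats_to) or len(beats_from) < 2:
        raise ValueError("need two beat lists of equal length (at least 2 beats)")
    # Find the beat interval containing t, clamped to the first/last interval.
    k = bisect_right(beats_from, t) - 1
    k = max(0, min(k, len(beats_from) - 2))
    # Fraction of the way through that beat in the source cover.
    frac = (t - beats_from[k]) / (beats_from[k + 1] - beats_from[k])
    # Same fraction of the way through the same beat in the target cover.
    return beats_to[k] + frac * (beats_to[k + 1] - beats_to[k])


# Toy example: a steady cover and a faster one that starts after a 2 s intro.
steady_cover = [0.0, 0.5, 1.0, 1.5]
fast_cover = [2.0, 2.4, 2.8, 3.2]
target = map_time(0.75, steady_cover, fast_cover)  # about 2.6
```

Linear interpolation inside each beat is a simplification. It keeps the lookup cheap, which matters when a player has to seek quickly enough that the switch is not audible.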

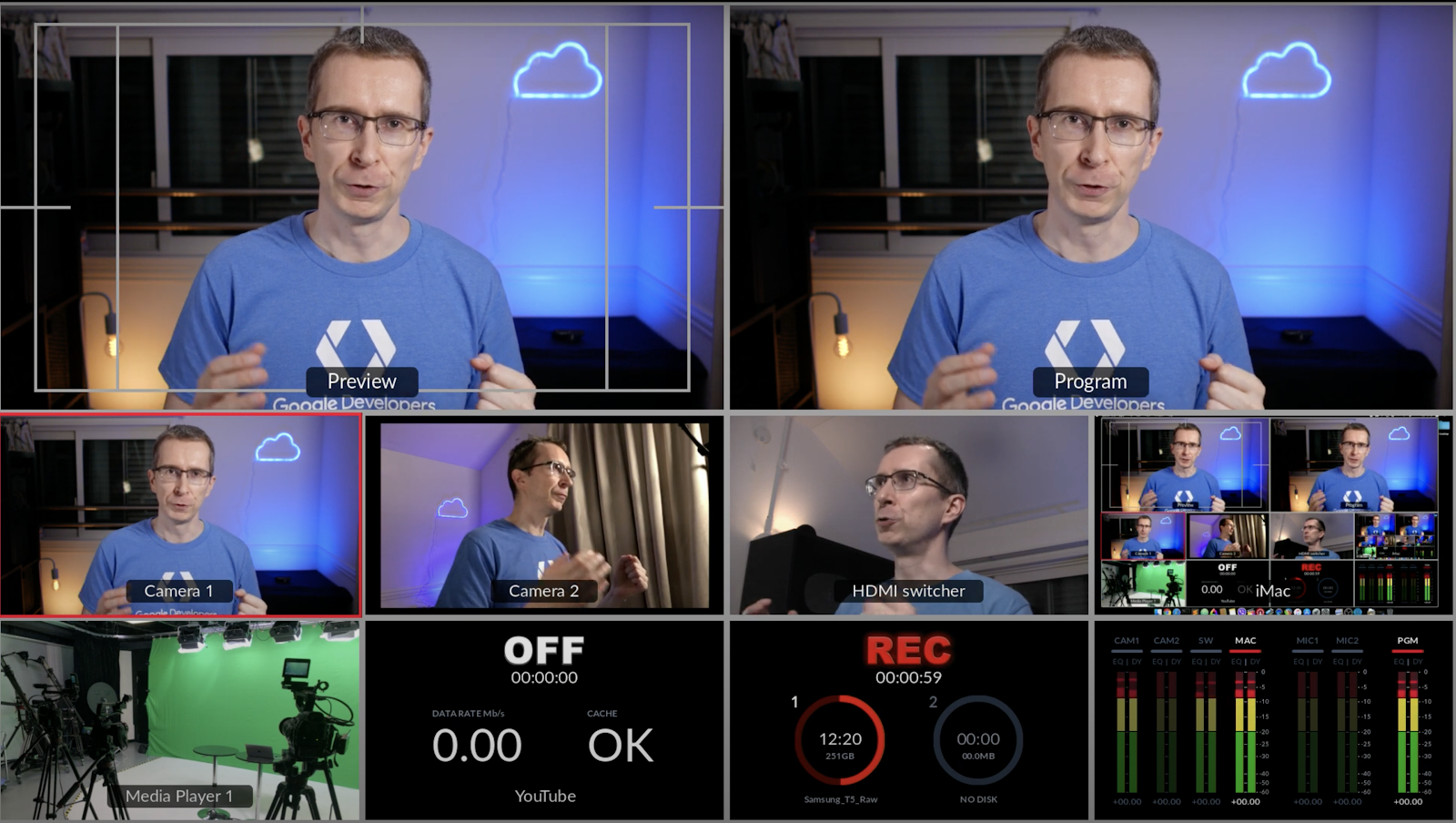

The experience centers on three YouTube players, a number we settled on after a lot of experimentation. Initially we thought more players would be more interesting, but with more of them the experience became chaotic and slow. Around the players we’ve added discoverable features like the hashtag drawer and stats page. Video game interfaces have been a big inspiration for us, as they combine multiple interactions in a single dashboard. We’ve also added an autoplay mode for users who just want to sit back and be taken through an ever-changing mix of covers.

We’re excited about how Infinite Bad Guy showcases the incredibly diverse talent of YouTube and the potential machine learning can have for music and creativity. Give it a try and see what beautiful, strange, and brilliant covers you can find.